Quantitative fault trees can be evaluated to compute the top event's unavailability, unreliability, and conditional failure intensity, as well as the importance of individual events. The mathematical background and formulas are described in the section "Analysis of Fault Trees" of the Ansys medini analyze User Guide. This section summarizes potential pitfalls when using the tool and interpreting its results.

The evaluation of a fault tree is a manual step and can be triggered on any event of the fault tree. The tool provides two distinct computations for the top and intermediate event probabilities:

Computing the probabilities at the mission time T specified in the project model and showing them on the diagrams. This computation is triggered using the "Calculate probabilities" context menu entry. All events in the subtree of the selected event are updated, and the new values are shown in the diagram(s).

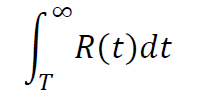

Evaluation over a mission time T to compute unavailability, unreliability, etc., considering the full time interval [0...T]. This evaluation is triggered using the "Evaluate Fault Tree..." context menu action.

NOTE [10] — The graphical editor always shows the probability of occurrence (unavailability) and the failure frequency at a specific mission time T. These values can differ from those of a full evaluation shown in the cut set editor, which computes aggregated values over the whole mission time [0...T]. Note further that the latter evaluation can use a custom mission time entered in the dialog when it is triggered.
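To illustrate why a point value and an aggregated value can differ, the following minimal sketch compares the point unavailability q(T) of a single non-repairable event with exponential failure behavior against its time average over [0, T]. It assumes that the aggregated figure is a time average; this is one plausible aggregation chosen for illustration, and the User Guide defines what the cut set editor actually reports. The function names are hypothetical.

```python
import math


def point_unavailability(lam: float, t: float) -> float:
    """Unavailability q(t) = 1 - exp(-lam * t) of a non-repairable exponential event."""
    return -math.expm1(-lam * t)


def mean_unavailability(lam: float, T: float) -> float:
    """Closed-form time average (1/T) * integral_0^T q(t) dt of the same event."""
    x = lam * T
    return 1.0 - (-math.expm1(-x)) / x


lam = 1e-4    # failure rate per hour (illustrative)
T = 10_000.0  # mission time in hours (illustrative), so lam * T = 1
q_T = point_unavailability(lam, T)     # value "at" the mission time
q_mean = mean_unavailability(lam, T)   # value aggregated over [0, T]
```

With lam * T = 1, the point value is about 0.632 while the average is about 0.368, so a value shown in the diagram and an aggregated evaluation result can legitimately disagree.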

RECOMMENDATION [10] — The calculation should always be triggered on the top-level event so that the whole tree is consistent. For R19.2 and earlier releases: if multiple models are used, the mission time should be set to the same value in all of them to avoid confusion in the graphical visualization.

Primary events can derive their probability from SysML elements and safety mechanisms; the failure rate and/or diagnostic coverage is then converted into a probability for the event.
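A minimal sketch of such a conversion is shown below. It assumes failure rates in FIT (1 FIT = 1e-9 failures per hour), a residual rate of lambda * (1 - DC) for the portion not covered by the safety mechanism, and an exponential model P(T) = 1 - exp(-lambda * T). These are common simplifications chosen for illustration, not necessarily the formulas the tool applies, and the function names are hypothetical.

```python
import math


def residual_rate(failure_rate_fit: float, diagnostic_coverage: float) -> float:
    """Failure rate (in FIT) not covered by the safety mechanism: lambda * (1 - DC)."""
    if not 0.0 <= diagnostic_coverage <= 1.0:
        raise ValueError("diagnostic coverage must be in [0, 1]")
    return failure_rate_fit * (1.0 - diagnostic_coverage)


def event_probability(rate_fit: float, mission_time_h: float) -> float:
    """Exponential model P(T) = 1 - exp(-lambda * T), with lambda converted from FIT to 1/h."""
    lam_per_hour = rate_fit * 1e-9
    # expm1 keeps precision for the very small lambda * T typical of hardware failure rates
    return -math.expm1(-lam_per_hour * mission_time_h)


lam_res = residual_rate(100.0, 0.9)       # 100 FIT with 90 % coverage leaves about 10 FIT
p = event_probability(lam_res, 10_000.0)  # slightly below lambda * T = 1e-4
```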

WARNING [10] — The fault tree evaluation always uses the most recently computed failure rates. To make sure these values are up to date, users need to trigger a recomputation of the failure rates before evaluating a fault tree.

RECOMMENDATION [11] — Before triggering a fault tree evaluation and analyzing its results, users shall recompute the failure rates to guarantee that the fault tree uses current values.

Time-dependent probabilities, such as those of monitored events, repairable events, and events with a Weibull distribution (non-exponential case), might lead to partly decreasing and discontinuous distribution functions. Ensure that the mission time is long enough for these events to manifest in the overall computation. This is especially true for monitored events, whose unavailability follows a "sawtooth curve".
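The sawtooth shape can be reproduced with a minimal sketch. It assumes a periodically tested event with a constant failure rate, a perfect test, and instantaneous repair at every multiple of the test interval tau, so that q(t) = 1 - exp(-lambda * (t mod tau)). The model and names are illustrative assumptions, not the tool's implementation.

```python
import math


def monitored_unavailability(lam: float, test_interval: float, t: float) -> float:
    """Sawtooth unavailability of a periodically tested event.

    Assumes a perfect test and instantaneous repair at every multiple of
    test_interval, so the event is 'as good as new' after each test.
    """
    time_since_test = math.fmod(t, test_interval)
    return -math.expm1(-lam * time_since_test)


lam, tau = 1e-3, 100.0  # illustrative: rate per hour, test interval in hours
samples = [monitored_unavailability(lam, tau, t) for t in (50.0, 99.0, 100.0, 150.0)]
# q rises until just before the test, drops back to 0 at t = 100 h, then rises again
```

If the mission time is shorter than tau, the drop at the test never occurs and the event behaves like an unmonitored one, which is why the mission time has to cover the test interval for the monitoring to show up in the results.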

Moreover, to get accurate results, the integration step width parameter needs to be set to an appropriate value. The step width determines the points at which the functions are evaluated for the numerical integration. In addition, the first interval [0, step width] is calculated with a higher resolution, since its contribution is disproportionately high for repairable events and certain Weibull settings, especially for short mission times.
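The following sketch shows the general idea of integrating a probability curve with a fixed step width while refining the first interval. It uses the trapezoidal rule and a hypothetical `first_interval_substeps` parameter; the tool's actual integration scheme and refinement factor are not documented here, so treat this as an illustration only.

```python
import math
from typing import Callable


def mean_over_mission(q: Callable[[float], float], T: float, step: float,
                      first_interval_substeps: int = 100) -> float:
    """Approximate (1/T) * integral_0^T q(t) dt with the trapezoidal rule.

    The first interval [0, step] is split into `first_interval_substeps`
    finer pieces; the rest of [step, T] uses (at most) the regular step width.
    """
    def trapezoid(a: float, b: float, n: int) -> float:
        h = (b - a) / n
        inner = sum(q(a + i * h) for i in range(1, n))
        return h * (0.5 * q(a) + inner + 0.5 * q(b))

    first_end = min(step, T)
    total = trapezoid(0.0, first_end, first_interval_substeps)
    if T > first_end:
        n_regular = max(1, math.ceil((T - first_end) / step))
        total += trapezoid(first_end, T, n_regular)
    return total / T


def q_exponential(t: float) -> float:
    """Illustrative curve: non-repairable exponential event with lambda = 1e-4 per hour."""
    return -math.expm1(-1e-4 * t)


approx = mean_over_mission(q_exponential, T=10_000.0, step=1.0)
exact = math.exp(-1.0)  # closed-form average for lambda * T = 1
```

For this smooth curve a 1 h step already matches the closed-form average closely; curves with sharp drops, such as the sawtooth of monitored events, are much more sensitive to the step width.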

RECOMMENDATION [12] — The integration step width should be adjusted to the test interval of monitored/latent events. In general, the step width should not be too large, since this could lead to coarse approximations of the integrals in certain cases.

NOTE [11] — The maximum resolution for integrals is currently 1 h (i.e., the smallest possible step width for the integration). This means that monitored events with a test interval of less than 1 h will appear as if their probability were constant.
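The effect of the step width relative to the test interval can be seen by sampling a sawtooth curve on a regular grid. This minimal sketch assumes the same idealized periodic-test model (perfect test, instantaneous repair) and hypothetical function names; it only demonstrates the sampling effect and does not reproduce the tool's internal behavior.

```python
import math


def sawtooth_q(lam: float, tau: float, t: float) -> float:
    """Unavailability of a periodically tested event (perfect test, instant repair assumed)."""
    return -math.expm1(-lam * math.fmod(t, tau))


def sampled(lam: float, tau: float, T: float, step: float) -> list[float]:
    """Values of q on the grid 0, step, 2 * step, ..., T."""
    n = round(T / step)
    return [sawtooth_q(lam, tau, i * step) for i in range(n + 1)]


lam, T = 1e-2, 10.0
coarse = sampled(lam, tau=0.5, T=T, step=1.0)  # test interval shorter than the step width
fine = sampled(lam, tau=0.5, T=T, step=0.01)   # step width well below the test interval
```

With a 0.5 h test interval and a 1 h step, every grid point coincides with a test, so in this simplified model the sampled curve is flat at zero; a step well below the test interval recovers the sawtooth.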

NOTE [12] — By default, the tool internally uses Binary Decision Diagrams (BDD), not the minimal cut sets, to compute the quantitative figures. This means that the precision of the results is independent of the cut-off settings for the cut set extraction: even with the cut set order set to 0, the results are exact.
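A BDD computes the exact top event probability by Shannon decomposition, P(f) = p_x * P(f | x = 1) + (1 - p_x) * P(f | x = 0), applied recursively over the basic events. The sketch below applies this decomposition exhaustively to a small tree with a repeated event. It yields the same exact value a BDD would, but without the node sharing that makes BDDs efficient, so it is only suitable for a handful of events. The tree encoding and names are hypothetical.

```python
from typing import Dict, Set, Tuple, Union

# A tree is either a basic event name or a gate tuple: ("AND" | "OR", child, child, ...).
Tree = Union[str, Tuple]


def basic_events(tree: Tree) -> Set[str]:
    """Collect the names of all basic events in the tree."""
    if isinstance(tree, str):
        return {tree}
    return set().union(*(basic_events(child) for child in tree[1:]))


def evaluate(tree: Tree, state: Dict[str, bool]) -> bool:
    """Boolean value of the tree for a complete assignment of the basic events."""
    if isinstance(tree, str):
        return state[tree]
    gate, *children = tree
    values = [evaluate(child, state) for child in children]
    if gate == "AND":
        return all(values)
    if gate == "OR":
        return any(values)
    raise ValueError(f"unknown gate {gate!r}")


def exact_probability(tree: Tree, probs: Dict[str, float]) -> float:
    """Exact top event probability via exhaustive Shannon decomposition."""
    names = sorted(basic_events(tree))

    def expand(i: int, state: Dict[str, bool]) -> float:
        if i == len(names):
            return 1.0 if evaluate(tree, state) else 0.0
        p = probs[names[i]]
        high = expand(i + 1, {**state, names[i]: True})   # P(f | x = 1)
        low = expand(i + 1, {**state, names[i]: False})   # P(f | x = 0)
        return p * high + (1.0 - p) * low

    return expand(0, {})


# Top event: (A AND B) OR (A AND C); basic event A appears twice.
top = ("OR", ("AND", "A", "B"), ("AND", "A", "C"))
probs = {"A": 0.1, "B": 0.2, "C": 0.3}
exact = exact_probability(top, probs)  # 0.1 * (1 - 0.8 * 0.7) = 0.044 up to float rounding
```

No cut sets are extracted, so no cut-off is involved. For comparison, multiplying gate by gate as if all inputs were independent gives 0.0494, and summing the cut set probabilities gives 0.05; both overestimate because A is shared.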

Beginning with version 2022 R2, a second option is available that truncates the fault tree computation and approximates the results using minimal cut sets. For more information, see the Ansys medini analyze User Guide. This computation does not use BDDs and provides best-effort approximations, which can vary depending on the truncation cut-off (i.e., the number of events in a cut set).
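Two standard cut-set approximations, the rare-event approximation (sum of the cut set probabilities) and the min-cut upper bound (1 minus the product of 1 - P(C) over all cut sets), are sketched below together with truncation by cut set order. They are textbook approximations chosen for illustration and assume independent basic events; the User Guide describes which approximation the tool actually uses.

```python
import math
from typing import Dict, FrozenSet, List

CutSet = FrozenSet[str]


def truncate(cut_sets: List[CutSet], max_order: int) -> List[CutSet]:
    """Keep only cut sets with at most `max_order` basic events."""
    return [c for c in cut_sets if len(c) <= max_order]


def cut_set_probability(cut_set: CutSet, probs: Dict[str, float]) -> float:
    """Probability that all events of the cut set occur (independent events assumed)."""
    return math.prod(probs[e] for e in cut_set)


def rare_event_approximation(cut_sets: List[CutSet], probs: Dict[str, float]) -> float:
    """Sum of the cut set probabilities."""
    return sum(cut_set_probability(c, probs) for c in cut_sets)


def min_cut_upper_bound(cut_sets: List[CutSet], probs: Dict[str, float]) -> float:
    """1 - prod(1 - P(C)) over all cut sets."""
    return 1.0 - math.prod(1.0 - cut_set_probability(c, probs) for c in cut_sets)


cut_sets = [frozenset({"A"}), frozenset({"B", "C"}), frozenset({"D", "E", "F"})]
probs = {"A": 1e-3, "B": 1e-2, "C": 1e-2, "D": 0.1, "E": 0.1, "F": 0.1}
for order in (1, 2, 3):
    kept = truncate(cut_sets, order)
    print(order, rare_event_approximation(kept, probs), min_cut_upper_bound(kept, probs))
```

Here the third-order cut set contributes more than the second-order one because its events are relatively likely, so truncating at order 2 drops almost half of the result (1.1e-3 instead of 2.1e-3).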

The probability calculations internally use high precision (a resolution of E-22), which should not, under any circumstances, negatively affect the results. Rounding is applied conservatively (rounding up).
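Conservative rounding of a displayed value can be sketched with Python's `decimal` module and `ROUND_CEILING`, which always rounds towards positive infinity, so a positive probability is never understated. The function name and the number of significant digits are hypothetical display settings, not the tool's configuration.

```python
from decimal import ROUND_CEILING, Decimal


def round_up_significant(value: float, digits: int = 3) -> Decimal:
    """Round a non-negative probability up to `digits` significant digits."""
    d = Decimal(repr(value))  # repr gives the shortest decimal form of the float
    if d == 0:
        return d
    exponent = d.adjusted() - digits + 1  # position of the last kept digit
    return d.quantize(Decimal(1).scaleb(exponent), rounding=ROUND_CEILING)


shown = round_up_significant(1.2341e-5)  # rounded up to 1.24E-5, never down to 1.23E-5
```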

WARNING [11] — The mean time between failures (MTBF) is calculated by evaluating 1/w(t*) for a very large t*. This is generally a good approximation, but it can introduce non-negligible errors in some cases, for example when w(t) is periodic or follows a sawtooth curve, as can happen in the presence of monitored events.
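The phase dependence can be demonstrated with a minimal sketch. It assumes the idealized periodic-test model (constant rate lambda, perfect test and instantaneous repair every tau hours), in which w(t) = lambda * exp(-lambda * (t mod tau)). For this model the long-run MTBF is tau / (1 - exp(-lambda * tau)), the reciprocal of the cycle average of w. The model and names are illustrative assumptions.

```python
import math


def failure_frequency(lam: float, tau: float, t: float) -> float:
    """w(t) for a periodically tested event with perfect test and instant repair.

    Between tests, w(t) = lam * exp(-lam * s), where s = t mod tau is the time
    since the last test (the component is renewed at every test).
    """
    return lam * math.exp(-lam * math.fmod(t, tau))


def long_run_mtbf(lam: float, tau: float) -> float:
    """1 / (mean of w over one test cycle) = tau / (1 - exp(-lam * tau))."""
    return tau / -math.expm1(-lam * tau)


lam, tau = 1e-3, 1000.0  # illustrative: lam * tau = 1
t_star = 1e6             # "very large" evaluation time, here a multiple of tau
mtbf_after_test = 1.0 / failure_frequency(lam, tau, t_star)           # 1000 h
mtbf_before_test = 1.0 / failure_frequency(lam, tau, t_star + 999.0)  # about 2716 h
reference = long_run_mtbf(lam, tau)                                   # about 1582 h
```

Depending on where t* falls within a test cycle, 1/w(t*) ranges from 1000 h to about 2716 h in this example, while the cycle-averaged value is about 1582 h.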